Jeremy Reimer/Waldemar Brandt/NASA

It started, as did many things in the ARM story, with Apple.

Steve Jobs had returned, triumphantly, to the company he had co-founded. The release of the colorful gumdrop iMacs in 1998, an agreement with Microsoft, and the sale of Apple’s ARM stock had brought the company from near-bankruptcy to a solid financial footing. But Apple’s “iCEO” was still searching for the next big thing.

Jobs had equipped the iMacs with a new connector called FireWire, which enabled fast transfers of video and sound. A file format called MP3 was becoming popular among computer users for sharing music, and companies had already started making portable MP3 players. But these devices had tiny amounts of storage, slow USB 1.0 transfer speeds, and terrible software. Jobs became obsessed with the idea of making a player and devoted almost all of his time to the project.

Apple partnered with a company called PortalPlayer, which had been working on its own player. The hardware used a custom ARM chip, the PP5502. It was a system on a chip with dual ARM7 cores running at 90 MHz with 32MB of onboard memory. The only other large chip on the motherboard was a FireWire controller. The flexibility of ARM licensing made it easy to design a CPU that had custom circuitry for things like MP3 decoding.

Elite Obsolete Electronics

How easy? An acquaintance, Dr. John Sims, told me the story of another MP3 player company from around the same time. It took a single engineer just six months to add a Digital Signal Processor (DSP) to the standard ARM design. A rival firm that was building chips from scratch rather than partnering with ARM had 60 engineers, and the project took three times as long.

The iPod shipped in 2001, and after a Windows-compatible version was released, the little music player became the industry standard. At the device’s peak, over 50 million iPods were sold each year. While people were enamored with its interface, ease of use, and iconic white headphones, most failed to realize that the iPod was actually a tiny computer. It had a CPU, memory, a miniature hard drive, and an operating system, and its touch wheel and buttons were like a little mouse and keyboard. It even had a bitmap display that could play simple games.

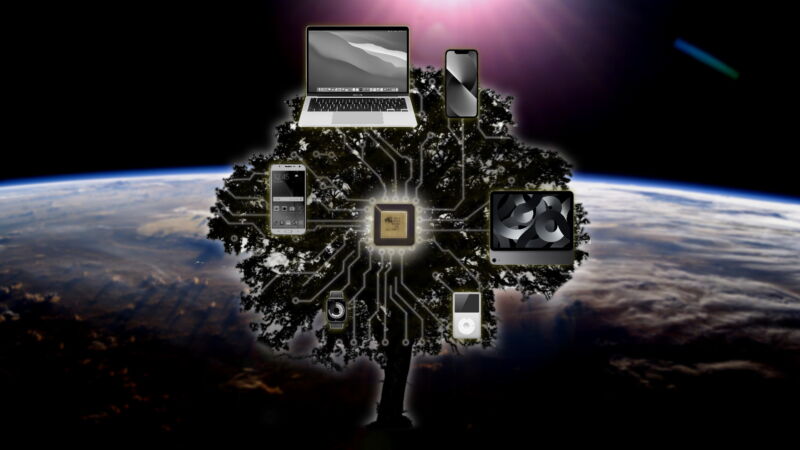

Speaking of games, ARM’s second big win in 2001 came in the form of Nintendo’s Game Boy Advance. The successor to the original Game Boy, it shipped with a 16.8 MHz ARM7 core with embedded memory. It also had a Sharp LR35902 for compatibility with the old system. Even portable game consoles were making the jump from CISC to RISC chips.

Evan-Amos (Wikipedia)

Pocket computers for everyone

The iPod was just the start of what would be an epoch-changing period in the mobile world. After a strange and ultimately doomed dalliance with Motorola to put an iPod inside the ROKR flip phone, Apple had set its sights on making a new phone from scratch.

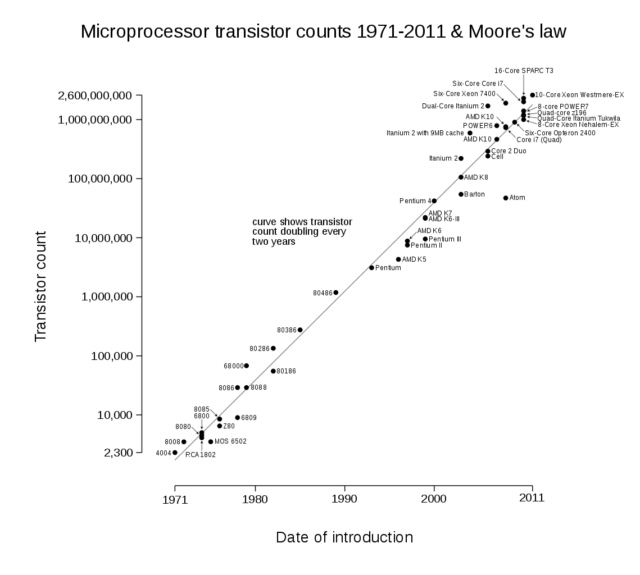

The project began in 2004. Jobs wasn’t sure whether the correct approach was to scale up the iPod to turn it into a phone or strip down the Macintosh’s OS X operating system to let it run on a mobile device. To settle the matter, Jobs had rival teams work on both approaches simultaneously. Tony Fadell’s iPod team was experienced, but they were facing the wrong side of Moore’s Law.

Wgsimon (Wikipedia)

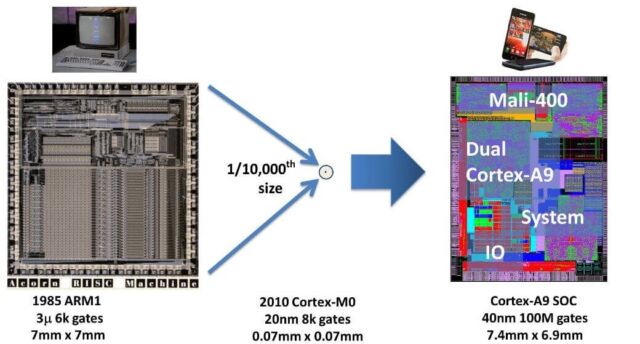

The ARM chip had come a long way from its first version in 1985. That chip, with 27,000 transistors, was produced on a 3-micrometer process. That meant that transistors and wires were roughly 0.000003 meters across, or 0.003 millimeters. That may seem small, but advances in silicon chip manufacturing meant that by 2006, chip foundries were using 90-nanometer processes. This allowed many more transistors, including boatloads of fast cache memory, to fit on the same size chip. It also meant that the chips could run at a higher clock speed.
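To put those two process sizes side by side, here is a small back-of-the-envelope sketch. It assumes an idealized chip where transistor density scales with the square of the feature size, which real chips only approximate. The function names are made up for illustration.

```python
# Feature sizes quoted in the text, in meters.
ARM1_FEATURE_M = 3e-6     # 3-micrometer process (1985)
FOUNDRY_2006_M = 90e-9    # 90-nanometer process (2006)


def to_millimeters(meters: float) -> float:
    """Convert a length in meters to millimeters."""
    return meters * 1_000


def linear_shrink(old_m: float, new_m: float) -> float:
    """How many times narrower a feature is on the newer process."""
    return old_m / new_m


def ideal_density_gain(old_m: float, new_m: float) -> float:
    """Idealized assumption: density grows with the square of the shrink."""
    return linear_shrink(old_m, new_m) ** 2


if __name__ == "__main__":
    print(f"3 micrometers = {to_millimeters(ARM1_FEATURE_M):.3f} mm")
    print(f"Linear shrink: about {linear_shrink(ARM1_FEATURE_M, FOUNDRY_2006_M):.1f}x")
    print(f"Ideal density gain: about {ideal_density_gain(ARM1_FEATURE_M, FOUNDRY_2006_M):.0f}x")
```

Under that simplification, features are about 33 times narrower, so roughly 1,100 times as many transistors fit in the same area. Actual gains depend on the design and the foundry.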

Software, in contrast, improved much more slowly. It took time to write and test—and to fix the inevitable swarm of bugs introduced with every new feature. So it was actually quicker to wait for Moore’s Law to make mobile chips available that could run existing OS X software than it was to add all the necessary features to the iPod’s barebones operating system. Jobs decided to go with the stripped-down OS X approach. But there was still the question of who would make the chips.

Jobs asked the CEO of Intel, Paul Otellini, if he wanted to bid on the right to make chips for Apple’s upcoming phone. The manufacturing giant was riding high on sales of desktop x86 CPUs that powered Windows-based computers. However, it also owned an ARM-based business, XScale, that it had purchased from Digital Equipment Corporation (DEC) in 1998. So Intel could have easily fulfilled Apple’s request.

But Otellini turned the offer down. He calculated that the maximum amount Apple was willing to pay per CPU was less than Intel would spend to make them, and he wasn’t certain that an Apple phone would sell in high volumes. Plus, he was nervous about showing support for XScale, especially as Intel was working on Atom, its upcoming low-power version of x86. He doubled down on x86 and sold the XScale division in 2006.

There was a certain irony here. DEC had originally sold its ARM business because it needed the money. It needed the money because Intel was laying waste to DEC’s minicomputer and workstation market. Cheaper x86-based PCs, produced at higher volumes, had made these larger computers less and less competitive over time. Now, Intel was giving up the same mobile chip division to focus on the desktop.

After Intel turned down the deal, Apple turned to Samsung. The South Korean conglomerate agreed to manufacture a powerful new ARM chip for Apple’s upcoming phone. It was the S5L8900, an SoC with an ARM11 core running (underclocked!) at 412 MHz, 128MB of RAM, up to 16GB of storage, and an integrated PowerVR MBX Lite 3D graphics processor. It was a remarkable chip, evocative of the ARM 250 “Archimedes on a chip” from 1991, but it was powerful enough to be the heart of a decent desktop machine from the turn of the century.

But it wasn’t a desktop machine. It was a phone—and a revolutionary phone at that. Jobs announced the iPhone at Macworld on January 9, 2007. Rewatching the announcement today, it feels like a turning point in history. Curiously, Jobs spent a lot of time emphasizing how the iPhone was actually three devices: a phone, an iPod, and an Internet communicator.

Steve Jobs unveils the original iPhone.

Nobody would describe an iPhone like that now. It’s a computer that fits in your pocket. Mainframe computers were the size of rooms, minicomputers were the size of fridges, and microcomputers were the size of toasters. These new devices could easily have been called nanocomputers. Instead, we call them smartphones, even though many people rarely use the phone part anymore.

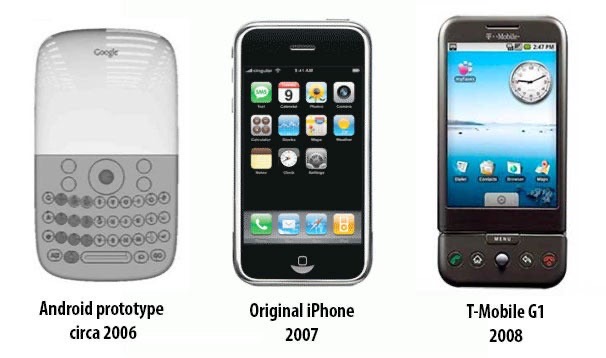

After the announcement, Google’s Android subsidiary quickly changed its product plans from producing a BlackBerry clone to making something more closely resembling an iPhone. The T-Mobile G1, released in 2008, also ran on ARM. It unleashed a flood of Android devices, all converging on the same form factor of a thin black rectangle with a single large touchscreen. Aside from the iPhone and Android, all other smartphone platforms fell by the wayside, and phones that weren’t smartphones quickly became extinct.

The rapid iteration of Android.

PCWorld

The chips come full circle

In 2008, Apple purchased P.A. Semi for $278 million. The company employed 150 engineers and designed power-efficient PowerPC CPUs. Many folks wondered why Apple bought a PowerPC company, especially since it had transitioned the Macintosh from PowerPC to Intel x86 processors in 2005.

But the engineers at P.A. Semi knew about more than just PowerPC. They included the lead designer of DEC’s Alpha and StrongARM processors and people who had worked on Intel’s Itanium, AMD’s Opteron, and Sun’s UltraSPARC. What Apple had bought was some of the world’s top experts in processor design.

This design team worked in secret for two years until 2010, when Apple introduced the iPad. Instead of using Samsung’s designs, it ran on something called the “A4”, the first SoC designed internally at Apple (Samsung still manufactured the chip). It ran at 1 GHz and used the newer ARM Cortex-A8 architecture as a starting point. The Cortex designs were significantly upgraded from the old ARM11 core that had powered the first iPhone. They had come a long way from the original ARM CPU!

Arm

The reveal of the A4 chip didn’t cause any big stirs in the CPU design community. It was seen as just a regular improvement on an existing mobile chip. Intel, for example, was busy promoting its high-end x86 desktop chips and attempting to reenter the mobile chip market with its low-power x86-based Atom. Other chip design companies, like Qualcomm, were having great success with their own ARM-based SoC designs, which found their way into many different Android products.

But a funny thing happened with these new Apple chips. The A4 was replaced with the A5 in 2011, doubling its CPU power and increasing its video chip speed significantly. The following year’s A6 did the same thing. Then, in 2013, the A7 was released. It was a fully 64-bit CPU, beating even ARM itself to the transition from 32 bits. It had a 64-bit instruction set, along with new, custom silicon that functioned as an image processor for the iPhone’s camera.

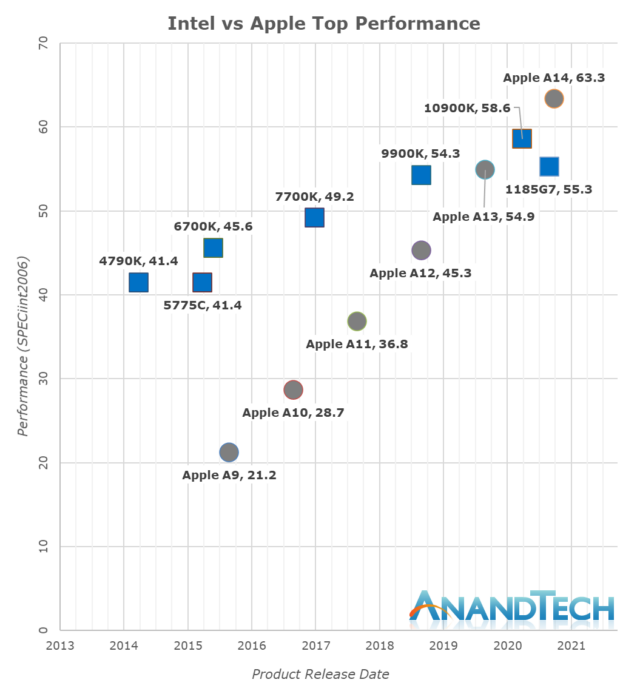

It seemed ludicrous to put a 64-bit CPU in a smartphone. Would a phone really ever need more than 4 gigabytes of RAM? But as time marched on, these arguments started to make less and less sense. And as the A7 gave way to the A8 through to the A12, something interesting was happening with the performance graphs of these mobile chips.
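The 4-gigabyte figure falls straight out of pointer width. Here is a minimal sketch, assuming a flat, byte-addressed memory model and ignoring workarounds such as physical address extensions. The helper names are hypothetical.

```python
GIB = 2 ** 30  # bytes in one gibibyte


def addressable_bytes(pointer_bits: int) -> int:
    """Distinct byte addresses a pointer of the given width can name."""
    return 2 ** pointer_bits


def addressable_gib(pointer_bits: int) -> int:
    """The same limit expressed in whole gibibytes."""
    return addressable_bytes(pointer_bits) // GIB


if __name__ == "__main__":
    for bits in (32, 64):
        print(f"{bits}-bit pointers can address {addressable_gib(bits):,} GiB")
```

A 32-bit pointer tops out at 4 GiB, while a 64-bit pointer raises the ceiling far beyond anything a phone could physically hold.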

AnandTech

It was the release of the iPad Pro in 2018 that made people scratch their heads in puzzlement. Benchmarks on its A12X Bionic CPU showed that it was faster (per CPU core, at least) in some tests than comparable Intel chips. This didn’t make any sense. How could a mobile chip be faster than a desktop one?

The answer was a confluence of many different factors. As we’ve seen already, the simplicity and elegance of the original ARM design gave these chips a leg up from the start in terms of performance, and especially performance per watt. Part of this elegance was due to the reduced instruction set computer (RISC) architecture, which had simpler CPU instructions and fewer of them than Intel’s complex instruction set computer (CISC) x86 chips.

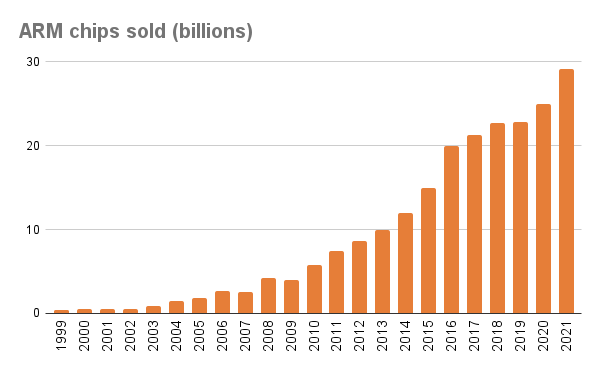

But Intel hadn’t been standing still over all these years. Starting with the Pentium Pro in 1995, the company added a hidden set of RISC-like micro-operations. Every time a programmer sent a regular x86 instruction to the CPU, it would be translated into these micro-operations internally. This meant the Intel chip could operate nearly as fast as the most powerful RISC chip. Any loss of speed from the x86 legacy baggage was overwhelmed by Intel’s massive economies of scale—the CPU giant was selling nearly 300 million CPUs a year by 2010. It had already beaten the other RISC CPUs, like SPARC, PowerPC, and MIPS. Even game consoles—which didn’t have to worry as much about legacy code compatibility between generations—had switched from PowerPC to x86 chips by 2013.
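The translation step is easier to picture with a toy model. The sketch below is purely illustrative: the `MicroOp` class, the `decode` function, and the load/add/store breakdown are assumptions made for this example, not Intel's actual micro-operation format, which is not public in this form. It only handles a simplified `add dst, src` instruction.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class MicroOp:
    """A hypothetical RISC-like micro-operation: one simple action."""
    kind: str                 # "load", "add", or "store"
    dest: str                 # register or memory address written
    srcs: Tuple[str, ...]     # registers or addresses read


def is_memory(operand: str) -> bool:
    """Memory operands are written in [brackets] in this toy syntax."""
    return operand.startswith("[") and operand.endswith("]")


def decode(instruction: str) -> List[MicroOp]:
    """Split a toy 'add dst, src' instruction into simple micro-ops."""
    mnemonic, operands = instruction.split(maxsplit=1)
    dst, src = [part.strip() for part in operands.split(",")]
    if mnemonic != "add":
        raise ValueError("this sketch only understands 'add'")
    if is_memory(dst) and is_memory(src):
        raise ValueError("x86 'add' does not take two memory operands")

    if is_memory(src):
        # Register destination, memory source: load first, then add.
        return [
            MicroOp("load", "tmp0", (src[1:-1],)),
            MicroOp("add", dst, (dst, "tmp0")),
        ]
    if is_memory(dst):
        # Memory destination: load, add, and write the result back.
        addr = dst[1:-1]
        return [
            MicroOp("load", "tmp0", (addr,)),
            MicroOp("add", "tmp0", ("tmp0", src)),
            MicroOp("store", addr, ("tmp0",)),
        ]
    # Register to register: already a single simple operation.
    return [MicroOp("add", dst, (dst, src))]


if __name__ == "__main__":
    for uop in decode("add [rbx], rax"):
        print(uop)
```

In this model, one complex instruction that reads and writes memory becomes three simple steps, each of which looks like something a RISC chip would execute directly. That is the general idea the paragraph describes, stripped of every real-world detail.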

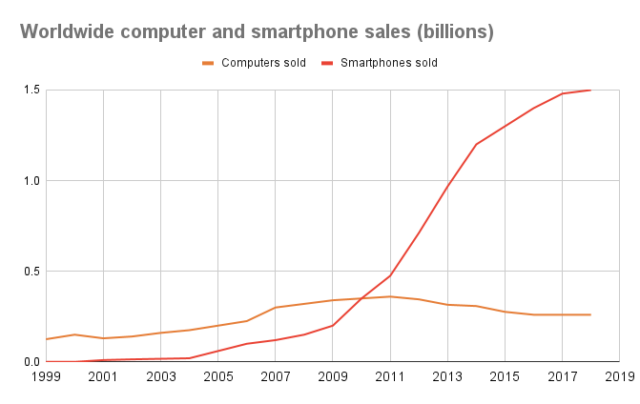

However, the global smartphone market was on a different scale entirely. Much of the world still struggled to afford a $2,000 personal computer, but a $200 smartphone was far more attainable. As a result, smartphone sales exploded, passing PC sales in 2010 and never looking back. By 2018, nearly 1.5 billion smartphones were sold each year. And after Intel gave up trying to get Atoms in smartphones, every single one had an ARM chip in it.

Jeremy Reimer

Now the economies of scale favored mobile chip manufacturing companies like Samsung and the Taiwan Semiconductor Manufacturing Company (TSMC). Apple invested billions of dollars into TSMC and moved all its chip manufacturing there. Intel couldn’t keep up from a fabrication standpoint. In 2020, Intel admitted it would have to delay its move from a 10 nanometer (nm) to a 7 nm process. In the meantime, TSMC leapfrogged to a 5 nm manufacturing process. While the process numbers started to lose their meaning at this scale, one thing was clear: Smartphone chips were ready to take the performance lead.

And in November 2020, they did. That’s when Apple released its M1 chips for the Macintosh computer line. These chips stunned the computing world—they were more powerful than the fastest Intel x86 CPUs, but they consumed a fraction of the power. ARM had always been a winner with performance per watt, but these chips were something else entirely.

Apple

I bought an M1-based MacBook Pro, and it felt like the first laptop actually deserving of the name. When I take it into work, I don’t even bother bringing the charger. No matter what programs I run, the fans never come on, and the battery never runs out. Inevitably, chips of this caliber will become available for Windows laptops as well. At that point, one has to imagine that Intel will be in real trouble.

After 35 years, ARM had come full circle. It started in 1985 as a chip for desktop computers, in particular the Acorn Archimedes. But when the Archimedes failed to capture the market, the ARM chip was spun out into its own company in 1990. After a slow start, ARM became the standard for the embedded CPU market and made its way into popular mobile devices like the iPod, the Game Boy Advance, and smartphones like the iPhone and Android handsets. Now, at long last, it was back in personal computers.

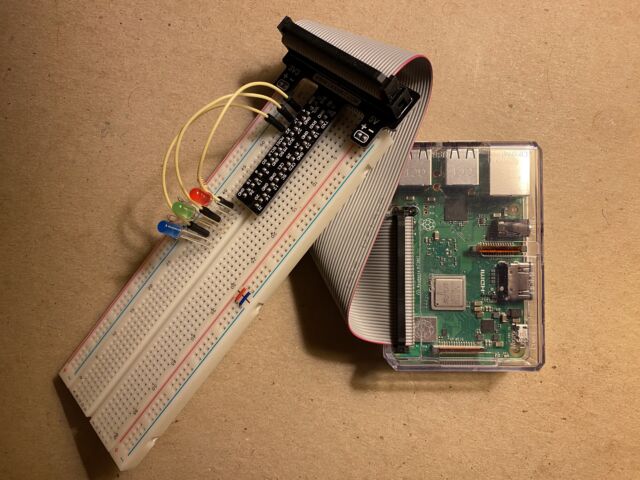

But the flexibility of ARM meant that these computers didn’t necessarily need to be expensive ones, like those sold by Apple. In 2009, the Raspberry Pi Foundation was registered in England, the home of the original ARM chip. Its mission was to continue the promotion of computer science in education, much as Acorn’s BBC computer did back in the 1980s. Given this storied lineage, there was only one processor the foundation would choose.

The first Raspberry Pi was released in 2012. It was a single-board computer the size of a credit card. The Pi sported an ARM11 processor, built-in memory, and every connector you would need for a computer: USB for mice and keyboards, a headphone jack, HDMI for a display, and Ethernet. It also came with a “General Purpose Input Output” or GPIO connector, allowing tinkerers to easily hook up and control lights, sensors, and motors. It cost $35. Subsequent models have gotten more powerful but not more expensive. The original mission of ARM, to bring computing power to the masses, had been achieved.

Jeremy Reimer

What it all meant

In 2006, Robin Saxby, the CEO of ARM, retired. He had planned it for some time. The company was in such good shape, and he managed the CEO transition so well, that ARM’s stock price didn’t even blink when the news came out.

I’ve written about computer history for many years, and one thing I’ve noticed is that superior technology rarely wins in the marketplace. The Amiga computer, also released in 1985, was easily 10 years ahead of its time, but its parent company collapsed, and the platform never recovered.

The story of ARM is very different. Although it took an unexpected path, the company founders succeeded beyond their wildest dreams. I had a theory about why this might be the case. To me, it seemed like both companies had brilliant engineers and superior technology but very different styles of management. To test my ideas, though, I had to find someone who was there and who knew everything that had gone down at ARM. I needed to talk to Saxby.

Saxby was delightful to talk to and generous with his time. He recounted the story of how he came to ARM and how, as a fellow engineer himself, he bonded immediately with the twelve founding engineers. He joked about how he selected specific engineers to fill key positions, like marketing director and sales director, to save money. But in truth, he felt that it was easier to teach good engineers how to sell than to teach salespeople how to engineer. He also insisted that each founder be given stock options so that they could all share in the company’s success.

But the key to Saxby’s management approach was simple yet uncommon in the business world: ARM grew because it helped others grow. It treated its employees more like people and less like human resources, giving them chances to learn and succeed along with the company. “I’m a great believer that in any team,” he told me, “any member is better at something than somebody else, so to get the team to perform you want everyone to perform on their best axis. Teams that work well together work better.” He emphasized the importance of being honest with employees and not overpromising what the company had to offer.

ARM even saw its competitors as potential partners—when it helped its partners succeed, ARM also benefited. “Because Texas Instruments didn’t have the ideal processor to meet Nokia’s needs,” he said, “they were interested in collaborating.” This collaboration led to ARM chips becoming the standard for the mobile phone market.

ARM chip sales over time.

Jeremy Reimer

Let’s contrast this philosophy with Amiga’s parent company, Commodore. Its founder, Jack Tramiel, believed that “business is war,” and he fostered a management style whereby for Commodore to win, others had to lose. He was then ousted from power by an uncaring financier, who replaced him as CEO with a management consultant, Mehdi Ali. Ali knew nothing about engineering and didn’t want to learn. He sought only to enrich himself.

Commodore quickly went bankrupt, and Ali vanished into obscurity and died in ignominy. ARM, on the other hand, continued to grow and succeed. Saxby was knighted by Queen Elizabeth in 2002, retired with his company in fantastic shape, continued to inform and educate the public as a trusted advisor, became president of the Institution of Engineering and Technology (IET), and is beloved by everyone, including his grandchildren.

So if you’re an aspiring tech CEO and want to know which path you should take, the answer should be obvious.

But this lesson is for more than just CEOs. It applies to everybody. We live in unprecedented times, where the very technology that allowed us to communicate with everyone on the planet now threatens to divide us into bickering—even warring—factions. Yet it need not be this way. Recent triumphs like the James Webb Space Telescope were achieved through the collaboration of engineers and scientists from all over the world.

Sir Robin Saxby explained: “The reality is, if we look at our planet, in each country we have the best of the best in something. It’s only in collaboration that we get the best result. That’s the only way for the future.”

I agree completely.